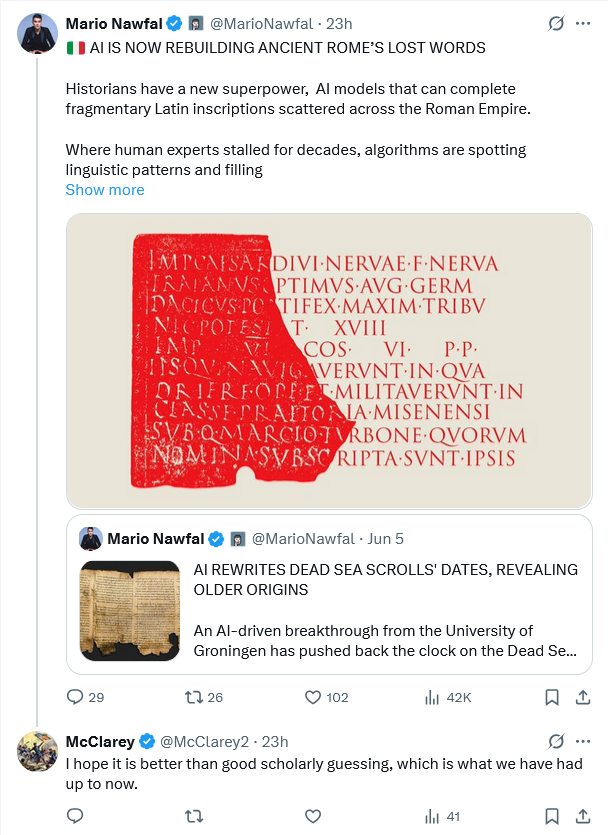

No, but it could be a useful tool. It should be rigorously tested with complete texts with half or more taken away to see how the AI performs. It would still be speculation, though perhaps better speculation than most specialists can do on their own; we are in an area which is as much art as science.
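For readers wondering what such a test could look like in practice, here is a minimal sketch, not anyone's actual evaluation method. It hides a chosen fraction of the characters in a complete text and scores how many a restorer gets back. The names `mask_text` and `score_restoration` are hypothetical, and masking scattered single characters is a simplifying assumption; real damage to inscriptions tends to come in contiguous gaps.

```python
import random


def mask_text(text, fraction=0.5, seed=0, placeholder="_"):
    """Hide `fraction` of the non-space characters of a complete text.

    Returns the masked text and the sorted list of hidden positions.
    (Hypothetical helper; random single-character masking is an assumption.)
    """
    rng = random.Random(seed)
    # Only letters and other visible characters are candidates for removal.
    positions = [i for i, ch in enumerate(text) if not ch.isspace()]
    hidden = set(rng.sample(positions, int(len(positions) * fraction)))
    masked = "".join(
        placeholder if i in hidden else ch for i, ch in enumerate(text)
    )
    return masked, sorted(hidden)


def score_restoration(original, restored, hidden):
    """Fraction of the hidden characters that the restoration got right."""
    if not hidden:
        return 1.0
    correct = sum(
        1 for i in hidden if i < len(restored) and restored[i] == original[i]
    )
    return correct / len(hidden)
```

The idea would be to pass the masked text to whatever system is under test, then compare its output with the original using `score_restoration`, and to give the same masked texts to human specialists for comparison.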

I have been studying Latin as a hobby since high school in 1976 and I cannot do what AI is purported to be able to do. Donald’s recommendation is correct and one that I wrote (albeit in different words) in the governance plan on AI at Neutrons ‘R Us:

“It should be rigorously tested with complete texts with half or more taken away to see how the AI performs.”

100% Yes!

Because some of our customers are international, translating texts written in foreign languages by the nuclear regulators in those countries is an issue to contend with, and already I have found that AI translation sometimes misses the mark. I cannot give details here in a public forum, however. BTW, that governance plan finally got approved; all the senior-level people signed it, so now they’re committed to the prescribed measures for protection and mitigation, even Donald’s counterpart. I wonder if there are any more honest lawyers like Donald left who place the law first instead of trying to sleaze their way to corporate greatness.

Prediction: there will be weeping, wailing, and gnashing of teeth when AI advocates discover that a formal documented risk assessment based on the likelihood and consequences of an adverse event (harm) is required in order to use AI for anything affecting quality or safety or adherence to regulations, and that an independent verification of AI results by an alternate methodology must be performed.

Mea sententia peculiaris sicut civis liber.

My personal opinion as a free citizen.
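A risk assessment based on likelihood and consequences, as mentioned in the prediction above, is often laid out as a simple matrix. The sketch below is only an illustration: the 1 to 5 scales, the thresholds, and the function name `risk_level` are all assumptions, not taken from any regulation or from the governance plan described.

```python
def risk_level(likelihood, consequence):
    """Classify a risk from likelihood and consequence, each scored 1 to 5.

    The scales and the cut-off values are hypothetical; a real assessment
    would use whatever the governing procedure defines.
    """
    if not (1 <= likelihood <= 5 and 1 <= consequence <= 5):
        raise ValueError("likelihood and consequence must be between 1 and 5")
    score = likelihood * consequence
    if score >= 15:
        return "high"
    if score >= 6:
        return "medium"
    return "low"
```

For example, a use of AI judged unlikely to go wrong (2) but with a moderate consequence if it does (3) would come out as `risk_level(2, 3)`, which is "medium" under these assumed thresholds.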

When I think of AI, all I have to do is remember the AI-generated description of Snoopy dressed as a WWI flying ace at Schroeder’s Halloween party.

Don & LQC are correct that there should be rigorous tests for the AI completion of texts.

But there won’t be.

It is almost as though AI appeared on the scene just as the expert-ocracy had discredited itself into diminished relevance. AI claims to fix all the problems with the expert class. AI claims to be nonpartisan, wise, competent, and benevolent.

We will find that AI is none of these, just as the expert-ocracy is none of these.

The question is, *when* will we find out…

Excellent comments by all so far, and I totally agree. Very important to keep common sense and not fall into the trendy trap of “AI knows everything.” “Garbage in, garbage out” still applies. So does “watch who is counting the votes.”

We can’t be sure AI gives correct, accurate information. Until we discern and scrutinise the source of the information, we cannot conclude the information to be correct. Discernment and scrutiny are human abilities. AI cannot do this.

The Romans were a legal-minded people, superstitiously ritualistic and stuck on formulaic language in such dedications, so I would think a well-instructed AI system could work well for ancient Latin (or ancient Egyptian, whose pharaohs loved their formulas, too). Would it work as well for texts of the freer-thinking and more poetic Greeks, I wonder?